Intro

So far, I have used Oracle AutoUpgrade, many times in 3 different OS’. Yet the more you think you’ve seen it all and reached the highest confidence level, another Upgrade will come byte you in the butt. The truth is every maintenance in any software or platform is unique, Oracle databases are no exception.

Automation will not solve all your problems which means organizations will still need humans when things get nasty.

This is my last series on AutoUpgrade troubleshooting as I anticipate reduced work on DBs in the near future. but I wanted to document few fixes to save your production upgrade from blowing up. Enjoy

AutoUpgrade is still the best

Despite some issues, AutoUpgrade remains the best option to upgrade databases to 19c and it’s easy to agree, after checking the below methods available to upgrade/migrate to 19c in this migration white paper.

The environment

In my case, I needed to migrate my 12c CDB to 19c, while preserving the Data Guard setup & reducing downtime.

| Platform | Source CDB database SI | Target CDB SI | Grid /ASM | Dataguard |

| Linux RHEL 8 | 12.1.0.2 Enterprise Edition | 19.17.0.0 Enterprise Edition | Yes | YES |

AutoUpgrade

| 19c jdk | autoupgrade.jar |

| 1.8.0_201 | Build.version 22.4.220712 |

The Upgrade strategy

While the upgrade process itself isn't covered here, I’ll mention the steps required to reproduce our AutoUpgrade in a Data Guard protected environment. If you want to look further into the steps, check out the excellent article by Daniel Overby Hansen called How to Upgrade with AutoUpgrade and Data Guard.

Prerequisites

The following is assumed to be already completed on both primary and standby hosts:

Install and patch a new 19c Oracle Database Home to the latest RU

Installing and patching a new 19c grid infrastructure to the latest RU

Upgrading the existing 12c grid into the new one (19c)

The steps

Stop Standby Database

Upgrade the primary DB

Run AutoUpgrade with the appropriate Config file [analyze, fixups, deploy]

After Upgrade

Restart Data Guard

Update the listener and /etc/oratab on the standby host.

Upgrade the DB by updating the Oracle Home information (srvctl upgrade database)

Re-enable Data Guard

Update RMAN catalog to new 19c client’s version

Reproduce the issue

After running AutoUpgrade Analyze to clear all warnings from the prechecks. The deploy unfortunately crashed.

The Configuration

- The Config file as shown below, can defer redo transport & stop Data Guard broker automatically if in use.

#Global configurations global.autoupg_log_dir=/u01/install/Autoupgrade/UPG_logs ################### # Database number 1 ################### upgd1.sid=PROD upgd1.source_home=/u01/app/oracle/product/12.2.0.1/dbhome_1 upgd1.target_home=/u01/app/oracle/product/19.0.0/dbhome_1 upg1.log_dir=/u01/install/Autoupgrade/UPG_logs/PROD upg1.run_utlrp=yes upg1.source_tns_admin_dir=/u01/app/oracle/product/12.2.0.1/dbhome_1/network/admin upg1.timezone_upg=yes upg1.restoration=yes

1. Autoupgrade analyze

C:\> java -jar autoupgrade.jar -config UP19_PROD.cfg -mode analyze

2. Autoupgrade deploy

The environment was ready for a go so I launched the deploy phase

C:\> java -jar autoupgrade.jar -config UP19_PROD.cfg -mode deploy

... An hour later

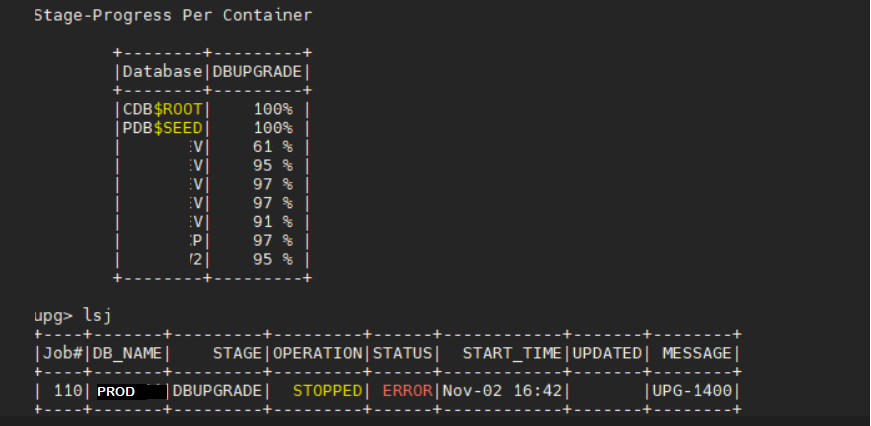

upg> lsj

+----+-------+---------+---------+-------+--------------+--------+------------+

|Job#|DB_NAME| STAGE |OPERATION| STATUS| START_TIME | UPDATED| MESSAGE |

+----+-------+---------+---------+-------+--------------+--------+------------+

| 110| PROD |DBUPGRADE|STOPPED | ERROR | Nov 02 16:42 | |UPG-1400 |

+----+-------+---------+---------+-------+--------------+--------+------------+

upg>

----------------------------------------------

Errors in database [PROD-MYPDB1] Stage [DBUPGRADE]

Operation [STOPPED] Status [ERROR]

Info [ Error: UPG-1400 UPGRADE FAILED [FSUAT]

Cause: Database upgrade failed with errors

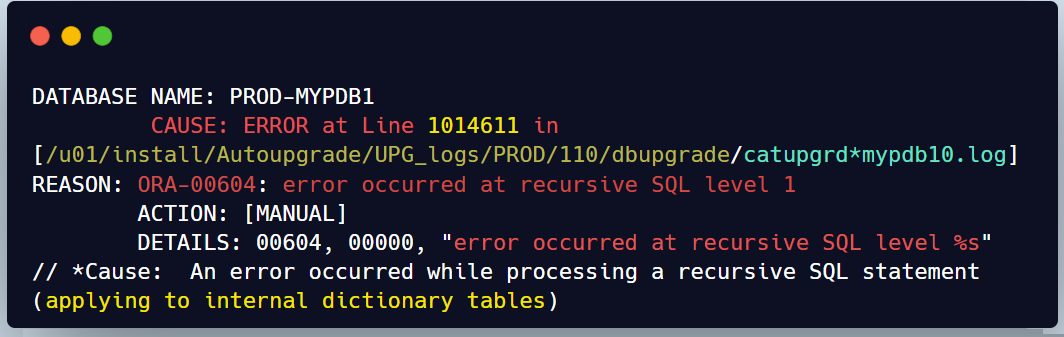

REASON: ORA-00604: error occurred at recursive SQL level 1

The OLAP Error

The upgrade phase never finished but most of the PDBs were having issues halfway through the upgrade (incomplete catalog)

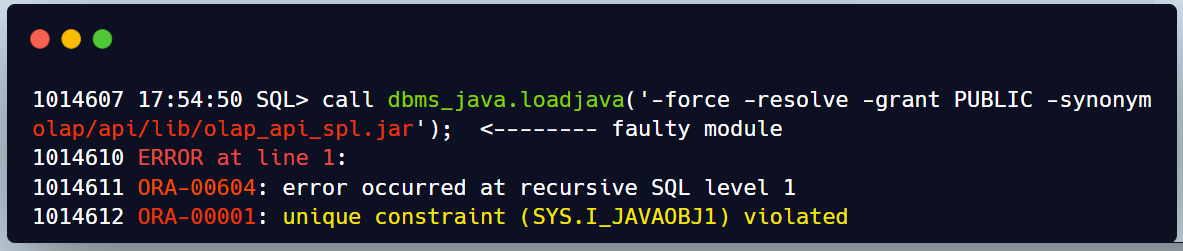

This is just an example for one of the error received by most of the PDBs in the source 12c CDB ORA-00604

When I check the line described in the error in catupgrd log file we can see the below excerpt

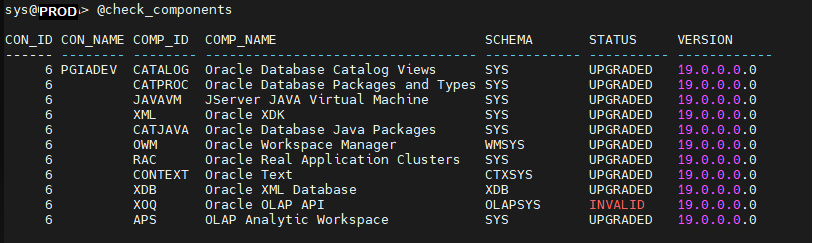

When we look at the status of the components on those impacted PDBs we can see that OLAP API is Invalid

Furthermore if we check the plugging database violations for these PDBs we find 2 OLAP culprits

NAME CAUSE MESSAGE STATUS -------- -------- ------------------------------------------------------- --------- MYPDB1 OPTION Database option APS mismatch:PDB installed version PENDING 19.0.0.0.0. CDB in stalled version NULL. MYPDB1 OPTION Database option XOQ mismatch: PDB installed version PENDING 19.0.0.0.0. CDB in stalled version NULL.

Explanation

Cause

This occurred because their previous upgrade from 11g to 12c didn't properly remove the deprecated 11g OLAP component from their PDBs after the conversion to multitenant. Refer to the preupgrade run note below.

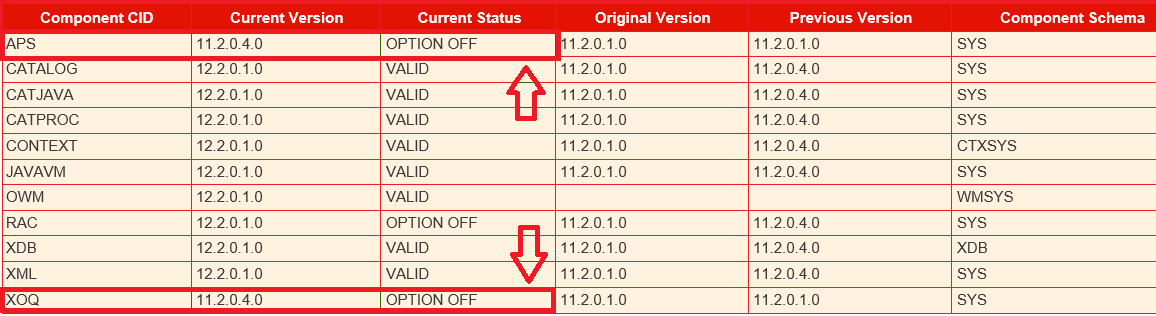

Indeed looking back at the Autoupgrade prechecks , we can notice that most PDBs have APS (Analytic Workspace)and XOQ (OLAP API) components that are there but market as ‘OPTION OFF’.

Expectation

This is where the AutoUpgrade should come in and flag these sort of issues as critical right from the early Analyze stage to help DBAs avoid upgrade crash during a production upgrade. Opening an SR is already a loss in terms of planned downtime.

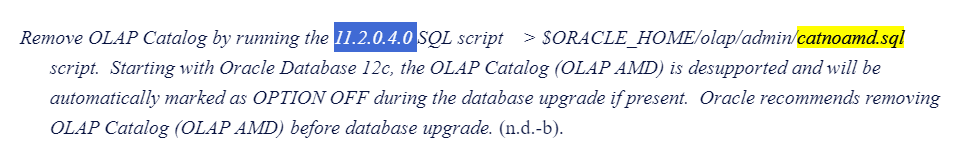

Solution

We’ll have to manually cleanup OLAP remnants before resuming the upgrade as described in Doc ID 1940098.1

I have gathered all the sequenced commands in 2 scripts olap_remove.sql along with remove_olap_leftovers.sql

$ vi olap_remove.sql

col name new_val pdb_name noprint

select name from v$pdbs;

spool &pdb_name..log

prompt ----> Remove OLAP Catalog

@?/olap/admin/catnoamd.sql

prompt ----> Remove OLAP API

@?/olap/admin/olapidrp.plb

@?/olap/admin/catnoxoq.sql

prompt ----> Deinstall APS - OLAP AW component

@?/olap/admin/catnoaps.sql

@?/olap/admin/cwm2drop.sql

prompt ----> cleanup leftovers and Recompile invalids

@remove_olap_leftovers.sql

@?/rdbms/admin/utlrp.sql

spool off

Run the cleanup script for each PDB

SQL> alter session set container=MYPDB1; @olap_remove

@remove_olap_leftovers.sql

alter session set container=MYPDB2; @olap_remove

@remove_olap_leftovers.sql------ Repeat for all PDBs

Checks

Once the removal performed , we should verify that is no conflicting OLAP issue is left in the environment

------ Repeat for all PDBs

1. The status of the components

SQL> select COMP_ID, COMP_NAME, VERSION, STATUS from dba_registry;

---- No OLAP component should be listed (Valid 19c options only)2. Confirm there is no violations remaining

SQL> SELECT name, cause, message,status

FROM pdb_plug_in_violations

where STATUS != 'RESOLVED' ORDER BY time;

Resume the job

upg> resume -job 110

After this the Autoupgrade completed successfully and the standby database was re-enabled as expected in the remaining steps.

CONCLUSION

While automation tools like AutoUpgrade are powerful, they can't predict and fix all potential bottlenecks.

Staying vigilant and utilizing troubleshooting skills remains crucial.

However, the integrated flagging of known issues would go a long way into improving the user experience of DBAs fostering greater adoption in the future.

Thanks for reading

Great intro! It's so true that automation isn't a magic bullet. Speaking of quickly seeing how code changes will look, have you ever used a Free online HTML viewer tool to preview HTML/CSS/JS snippets? It can be a real lifesaver for quick checks!

ReplyDelete